Leaderboard

- comment (Members): 2,169 points, 32,115 posts
- Wim Decorte (Members): 512 points, 5,935 posts
- eos (Members): 226 points, 1,600 posts
- Ocean West (Administrator): 206 points, 4,485 posts

Popular Content

Showing content with the highest reputation since 12/04/2010 in all areas

-

Anatomy of a Good Topic

9 points

This post has been incorporated into our site's guidelines. https://fmforums.com/guidelines

-

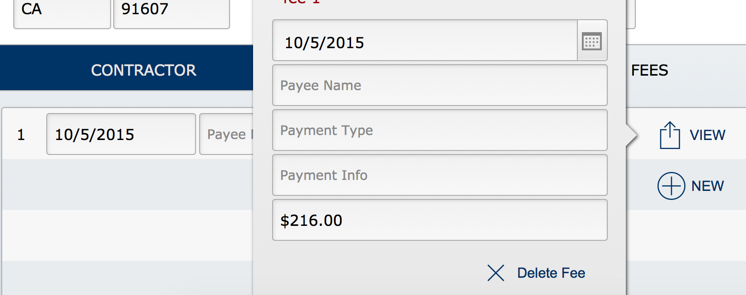

Title Footer

6 points

- 167 downloads

- Version 1.0.0

A single table might have up to four layouts to present its data:

- List view (for quick reviewing of records)
- Print view (a black-and-white view for printing)
- Search form (holding all the fields in the table that Users can search)
- Detail view (a form layout used for editing a record)

We've all known for years that we can hide things in sub-summary parts and only display them if properly sorted, but now in 14 you can potentially use a single layout for listing, searching and editing.

In 14, navigation bars allow scrolling in form view, and we can take advantage of this by using the Title Footer (or Title Header) to hold our 'form'. A simple switch from list view to form view can provide Users all the fields they need. The title footer can hold portals, tab controls and anything else you would place on an editing or find layout. Related records placed in the Title Footer are not fetched until switching to form view, so they have no weight until displayed (just like popovers, tab controls and sliders).

In the attached sample, layout triggers assist by switching to form view if in find mode and switching back to list view after a find. I have hidden the list view fields and labels when in form view to simplify the view.

The benefits?

- Fewer layouts in a solution
- Lightweight
- No need to switch layouts (which might fire triggers)
- Endlessly scrollable form view (not limited like popovers and sliders)

Potential issues? Yes.

- The header is only one line tall in my sample (holding the list's labels). If you need a vertically tall header for the list view, then this approach might leave too much 'unavailable' space at the top. And switching the process later would not be a simple task.
- I hid the header labels and fields in the list, which makes it a bit more work to design (although minimal). You might wish to let them display and only provide additional fields below.

This is just another useful, simple tool for our toolkits.

BTW, I have specified 'not applicable' on the FM version because 14 hadn't been added as an option yet. Also, this new forum software doesn't allow a title or subject; it only accepts a valid file name.

Free

-

interface check

5 points

-

enter into valuelist

5 points

Ya know, when you rate people with negatives, they will stop assisting you. Wouldn't you in their place? Why should we risk responding just to get slapped for it if you simply don't care for our response? You are judging whether our answers are right or wrong and you know very little about FileMaker. Would you let a podiatrist take out your gall bladder? No - you listen to those that know what you want to know. Soon, at this rate, you will have nobody left to help you.

By the way, I am not responding ... I'm afraid to. Also, don't tell people to read the post again ... that is insulting. People here READ the posts. Your posts have been almost impossible to understand at all. That is not our fault. It is yours.

-

Creating a separation model from an existing solution

No, you can't have pure separation. And yes, you might have to make more changes in the data file if your solution is still young (and occasionally throughout), but that is easily offset by the time saved not migrating. I've designed some large systems using both separation and not. Here are the reasons that *I* prefer using separation. These probably aren't the top reasons at all, but they are important to ME:

Transfer of Data (the best solution is NOT moving data)

If you use a single file and design in another version (so Users can continue working in the served version), you have to migrate the data from the served file to your file, and that can take hours even when scripted. Every time you move the data, you risk something going wrong, and the likelihood increases each time you migrate. With separation, the data file remains untouched.

Import Mapping (unsafe and time consuming)

Scripting the automatic export and import on every table in the single file solution takes time as well. Every export and import script must be opened and checked because you have added (and probably deleted) fields. You can make a mistake here. And even if you don’t, the 'spontaneous import map' bug might get you. Dragging a field around in the import maps is very time-consuming and difficult (FM makes it very difficult with its poor import mapping design). With separation, there is no data export/import or mapping.

Data Integrity (and User Confidence at risk)

When working in a single served solution, you risk records not being created when scripts run (if you are in the field options calculation dialog), triggers firing based upon an incorrect tab order that you just changed on a layout, and a slew of insidious underground breaks, most of which you will never see until much later. By then, all you will know is that it broke but not why. You risk jangled nerves and lost faith of your Users, who might experience these breaks, data losses, screen flashes and other oddities. A User’s faith in your solution is pure gold and it should not be risked needlessly.

External Source Sharing (provide ‘fairly’ clean tables for integration needs)

It has been discussed many times … why we have to wade through a hundred table occurrence names (particularly if using separation model anchor-buoy, of which I'm not a fan anyway) and also have to view hundreds of calculations (which external sources don’t want). It can be a nightmare. It is much better with a data file, where calculations are kept to a minimum and the table occurrences are easily identifiable base relationships that are easy to exchange with MySQL, Oracle and others. If FM made calculations another layer (separate from data), then FileMaker would go to the top instantly … rapid development front end and true data back end. But I digress …

Crashing (potential increases when Developing in live system)

Working in developer mode (scripts with loops, recursive custom functions) means that there is a higher likelihood of crashing the solution when designing - admit it. If you crash the solution while in the schema, it is (usually) instant toast, but regardless, you SHOULD ALWAYS replace it immediately (run Recover, export the data and import it into a new clean clone). With the separation model, you never design live, so it is moot. No trashed file means no recover and no migration. You are designing as we all should … on a Development box.

Transferring Changes (even if careful)

Working in a live single file system, a change you think is small might have unseen and unexpected side-effects. That potential danger increases as the complexity of your solution grows. This is why we all should beta test changes before going live. But even if you are careful, and even if you design on a Developer copy, test it, document your changes and then re-create those changes in the served file (eliminating the data migration portion completely), you risk mistyping a field name or script variable, not setting a checkbox and so forth. Separation can also risk error, because it can mean adding a field or calculation to the Data file, but that is a very small percentage of the changes we make (except very early); most changes are layout objects, triggers, conditional formatting … UI changes. It is more difficult to replicate layout work between files. And most changes in the data file occur before the solution is even made live the first time.

Same Developer experience (Developer works in single file regardless)

In separation, all layouts and scripts reside in the UI no matter how many Data files are linked as data sources. The only time you have to even open the Data file is to work in Manage Database. Added ... or set permissions.

Fewer Calculations (means faster solution?)

If one decides to go with Separation, the goal is no calculations or table occurrences in the data file, but that goal is not attainable. Since this is your goal, you try to find alternate methods to achieve the same thing and avoid adding calculations (which you should do anyway). You will use more conditional formatting, Let() to declare merge variables, rearranged script logic to handle some of it … there are many creative options. And once you start down that path, you will find that you need fewer calculations than you ever imagined.

The separation model means I can work safely on the UI on the side and quickly replace it while leaving the data intact, with the least risk. Some calcs need a nudge to update, and privileges must be established in the data file as well; those are the only drawbacks I have experienced with it. I have never placed the UI on local stations. I have heard (from trusted sources) that it increases speed. There was a good discussion on the separation model here: https://fmdev.filema...age/65167#65167

Everyone has their opinion. I did not want to go with separation and I was forced into it the first time (by a top Developer working with me, LOL). I am glad I was. Once I understood its simplicity and that everything happens in the UI, I was hooked. Of course, if it is a very small solution for one person then I won't separate, but for a growing business with data being served, I will certainly use it.

Next time that, in your single-file solution, you go through a series of crashes because of network (hardware) problems and you have to migrate every night, think about separation. Next time you need to work in field definitions but can't because Users are in the system, think about separation. Most businesses are constantly changing, and that means almost constantly making improvements to a solution. You can NOT guarantee that changes in Manage Database or scripts will not affect Users in the system.

Risk is simply not in my dictionary when it comes to data. Enough can go wrong even when we take all precautions. I don't dance with the devil nor do I run with scissors.

Joseph, I think it would be easier to start from scratch because it makes you re-think each piece. And, as you learn, you will find better ways of re-creating each portion of it. I would also wait until the next version arrives to take full advantage of its new features. It won't be long now.

5 points

-

Changing Dimensions of Layout Parts Dynamically

Actually, there is a way to measure it - but it takes quite a lot of work...

MeasureBeforeDisplay.fmp12

4 points

-

A Forward Look About FileMaker Platform Security

Developers and users of the FileMaker Workplace Innovation Platform must be concerned about security of their deployed solutions. Likewise, they must have a forward-looking perspective about key issues in this arena. Security has as its major purpose the preservation of Confidentiality, Integrity, Availability, and Resilience (CIAR) of their systems. Liabilities resulting from breaches can substantially affect continued business operations, continued business existence, imposition of civil or criminal sanctions, brand reputation, and customer or client confidence.

I see at least ten security concerns that the FileMaker Developer Community must consider going forward for the next few years and development cycles:

- The Business of Security: What Is Security Supposed To Do?
- Zero Trust implementation for the FileMaker Platform [https://fmforums.com/blogs/entry/2047-federated-identity-management-zero-trust-and-the-filemaker-platform/]
- Federated Identity Management and the end of FileMaker Accounts in files
- Native Multi-Factor Authentication (not SMS)
- Further implementation of Secure by Default and Rule of Least Privileges for the FileMaker Platform
- Expansion of Roles-Based Construct in the FileMaker Platform
- SaaS Security Implementation for the FileMaker Platform
- Building a Culture of Security in the FileMaker Developer Community
- Building a Culture of Security among the FileMaker Customer Base
- The Coming Regulatory and Political Onslaught Against the Tech Sector

So as we go through the just-started FileMaker, Inc. Fiscal Year running up to the next version release and the 2019 DevCon, we should keep these elements in mind.

Steven H. Blackwell, Platinum Member Emeritus, FileMaker Business Alliance

4 points

-

SVG Icon Manager

4 points

Hey everyone, I love how open the FileMaker Community is, and how much is shared freely, so I wanted to contribute. I created a database to manage SVG icons. The icons are all royalty free, provided by Syncfusion, and there are about 4,000 icons, all with search tags to make finding them super easy. You can find out more about the file, and download it, here: http://www.indats.com/2015/06/15/indats-icon-manager/

Please let me know any feedback you have on the file, thanks!

-

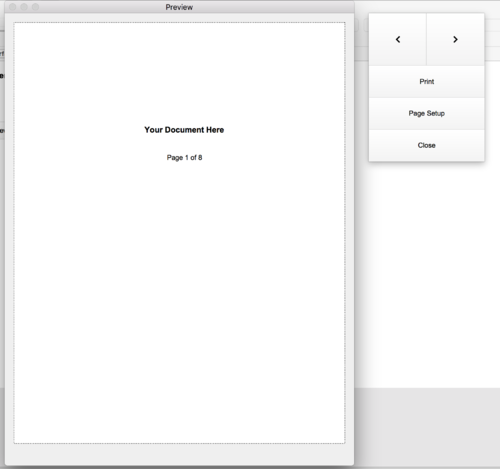

FM16 Card Style Window Preview Mode Action Button Pallet

- 327 downloads

- Version 1.0.0

This technique was inspired by @Claus Lavendt in a tweet. With the offset available in the new card style window mode, you can now create a tool palette to interact with a document in preview mode.

Free

4 points

-

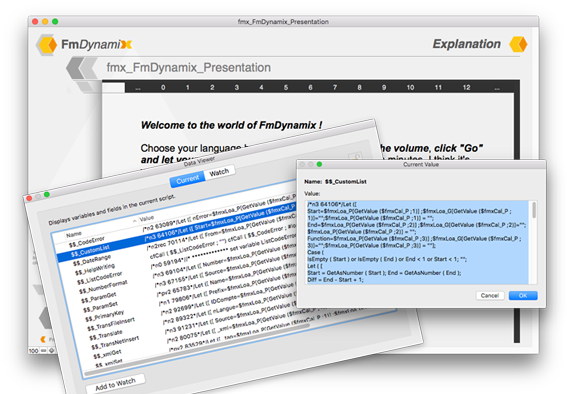

FmDynamix project - cfCall () and #load ()

Hi All,

I am happy to present the FmDynamix project, including cfCall () and #load (), my 2 new custom functions, made in the interest of testing FileMaker further and further.

I tried to give you a presentation of this new method that is as explicit as possible; I hope I have succeeded! You will find the description and the presentation here, along with the demo file:

fmx_FmDynamix_Presentation.fmp12

I hope that this file will make you want to look at this method; I do not think I have seen anything similar. I hope that this "modularity" will please you and will give you some ideas. Feel free to test it and give me your comments!

Thanks for your interest,

Agnès (and thank you to Google Translate too)

4 points

-

SECURITY VULNERABILITIES OF FILEMAKER PLATFORM API’S: AN UPDATE

Security Vulnerabilities of FileMaker Platform API’s: An Update

January 9th 2017

In an April 2016 entry on this BLOG titled The FileMaker Platform API’s Are Your Friends, Right? [http://fmforums.com/blogs/entry/1535-the-filemaker-platform-api’s-are-your-friends-right/] I discussed a number of FileMaker Platform security issues centered on the uncontrolled use of a number of external Application Program Interfaces (API’s). There are at least nine of these API, possibly more, if ExecuteSQL is included. The central thesis of that article was that these API’s provide unexpected attack vectors to compromise FileMaker Platform files. As noted at the time:

"Many FileMaker developers are not aware, however, that these API’s have the capability to access customer or client solutions in unexpected ways and to extract or insert data, to manipulate business processes developers embedded into these solutions, and to compromise the integrity of these solutions."

Unfortunately, in the intervening nine-month time span, we continue to see cases where several of these API have been used for malicious purposes to compromise FileMaker Platform files’ business process integrity, to manipulate data, and to extract data. And many in the developer community remain unaware of this problem.

In this BLOG entry, I will describe two of these API’s in greater specificity and detail, including describing a variety of attacks they can facilitate. This article will not discuss the ActiveX API that is available on Windows OS; however, developers should give similar attention to that approach. Developers need to be aware of these items in order to protect their files and those of their clients.

The two API at the center of this focus are Apple Events and the FMPURL process. In the earlier article, I noted several elements about these that bear repeating here:

"[These API] cause particular concern because of their breadth and relative ease of use…. The Apple Events Suite has an extensive set of commands that can read and write data, read metadata, manipulate the UI, and trigger scripts. In addition, they can work outside the normal constraints found on layouts in a file. [http://thefmkb.com/5671] The FMPURL…can open a file and run a script in it. If the file is already open, then the script will still run. [http://thefmkb.com/5560]"

A few general comments about both of these API’s:

- They are not platform-specific: a client organization is not immune from an Apple Event attack just because it is an all Windows OS environment. It’s the OS of the attacker that controls whether the API can be used.
- There are some ways within Privilege Sets to constrain behavior of these API commands when they are applied on a file. The Export privilege bit can control the ability of Apple Events to extract data from a file. The Layout Access privilege bits can also constrain the ability to see contents of a layout. Likewise, Script Access privilege bits can control the availability of a script to either of these API.
- These API often perform actions in unexpected fashions that fall outside the normal, traditional, and familiar FileMaker Pro User Interface behavior. This is part of what catches developers by surprise.

—Apple Events—

When a file is open, whether standalone or hosted by FileMaker Server, an attacker can send Apple Event commands to it, causing it to perform a variety of actions, including:

- Run any script to which the user has access, irrespective of whether that script is in the list of Scripts or whether it is attached to some UI element, such as a button.
- Navigate to any Layout, irrespective of whether that Layout’s name is in the list of Layouts or not. If the user’s Privilege Set has access to see that Layout, then its contents are visible whether the developer ever intended for the user to view the Layout or not.
- Return various metadata about the file, including such items as Script Names, Value List Items, Layout Names, Field Names, etc. If a user’s Privilege Set does not allow access to the item, its name does not appear in the list returned.
- Put data into any field in the database or extract data from any field, irrespective of whether that field is on the active Layout or is on any Layout for that matter.

Here are several examples of these scripts, all working on a file named Our_Secret_Information.fmp12.

    tell application "FileMaker Pro Advanced"
        activate
        go to first layout
    end tell

    tell application "FileMaker Pro Advanced"
        activate
        do script FileMaker script "Relog_as_Admin"
    end tell

    tell application "FileMaker Pro Advanced"
        activate
        set somevar to name of every layout
    end tell

    tell application "FileMaker Pro Advanced"
        activate
        set somevar to name of every field
    end tell

    tell application "FileMaker Pro Advanced"
        activate
        set somevar to get data field "CreditCardNumber"
    end tell

—FMPURL—

The FMPURL command’s principal attack vector is that it can be used to run any Script in a file to which a user’s privileges have access. Similar to Apple Events, this occurs irrespective of whether that script is in the list of Scripts or whether it is attached to some UI element, such as a button.

If the file is closed, the command first opens the file with supplied credentials, then runs any OnFirstWindowOpen script, and then runs the designated script from the FMPURL command. As a result of this behavior, a Halt Script step at the end of the opening script has the effect of blocking the running of the FMPURL designated script. Some developers have utilized this technique to block FMPURL calls to scripts in a file. However, if the file is already opened or if there is no opening script, then the designated script does run.

Here is an example of calling a script, again in our file Our_Secret_Information.fmp12, hosted on a server at IP address 0.0.0.0.

    fmp://0.0.0.0/Our_Secret_Information.fmp12?script=Relog_as_Admin

—What Is the Significance Of This and How Do We Address This?—

One of the many reasons we caution developers against embedding security elements such as Identity and Access Management controls into the data layer of FileMaker Pro databases is precisely because such elements are vulnerable to these API attacks.

Think for a minute about that Relog_as_Admin script that presumably relogs into the file with a [Full Access] Account. If an Attacker can trigger that script and cause it to run, irrespective of what the developer might have intended, then the Attacker has full access to the file. This has actually happened.

Or, suppose that a developer has made a “Developer_Only” layout in the file, removed it from the list of layouts, and left sensitive information on it. If the Attacker can navigate to that layout, and if it is not protected by settings in the Privilege Set, then the Attacker can learn the contents of the information on it. This has actually happened in numerous instances, including, unbelievably, the appearance of [Full Access] level credentials left exposed on the layout!

Likewise, suppose that a developer has made a so-called “Privileges Table” with various fields that purport to control whether a user can do such things as create records. Using the Apple Event Set Data command, an Attacker could likely change the values in these fields if they do not enjoy additional protection. More likely even, the Attacker could simply issue a Make New Record command and create the record. That is a process frequently used to thwart developer-imposed limitations on the number of records in a demonstration version of a vertical market solution.

So, what can be done to manage this situation and to prevent these types of attacks?

In FileMaker® Pro 15, FileMaker, Inc. added a new Extended Privilege option in the Privilege Set called fmscriptdisabled. Developers must explicitly invoke this option; it is not a default option. What it does is prevent Apple Events (Macintosh OS) and ActiveX commands (Windows OS) from activating scripts, just as the name implies. It has no impact on FMPURL or on other Apple Event commands that do not involve triggering of scripts.

Some of the other items in a Privilege Set, notably Export and data layer modification elements, can control Get Data and Set Data Apple Events. If Export is disabled, then Get Data will not return data from the selected field. In tables where the editing privileges are restricted, likewise, Set Data will not add data to a field. Creation and deletion privileges behave in similar fashion. Remember, we are talking here only about Apple Events. Other processes may behave differently.

Controlling API behavior is important; however, it is not the only security feature that developers must invoke to assure Confidentiality, Availability, and Integrity of their database systems.

So, clearly what we need here is a way to block these API from interacting with FileMaker Pro files. FileMaker, Inc. is aware of these issues and has been working on new ways to address them. In the Product Road Map Webinar presented on November 30th 2016, FileMaker, Inc. noted that the next version of the FileMaker Platform will contain a number of additional security enhancements. I am authorized to say that one of those enhancements will be a new process for more closely and granularly controlling several of these API’s.

At such time as there is any new version of the FileMaker Platform, I will have additional comments and analyses of the issues related to these API’s.

4 points
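As a rough illustration of the FMPURL behavior described in the post, the Python sketch below parses a URL of the form shown there and restates the rule about when the designated script runs. It does not talk to FileMaker at all. The names `FmpUrlCall`, `parse_fmpurl` and `designated_script_runs` are hypothetical, and the rule encoded is only a paraphrase of the post, not FileMaker's actual implementation.

```python
from dataclasses import dataclass
from typing import Optional
from urllib.parse import parse_qs, urlsplit


@dataclass
class FmpUrlCall:
    """Hypothetical container for the parts of an fmp:// URL."""
    host: str
    file_name: str
    script: Optional[str]


def parse_fmpurl(url: str) -> FmpUrlCall:
    """Split an fmp:// URL into host, file name and requested script."""
    parts = urlsplit(url)
    if parts.scheme != "fmp":
        raise ValueError(f"not an fmp URL: {url!r}")
    query = parse_qs(parts.query)
    # parse_qs returns a list per key; take the first "script" value if any.
    script = query.get("script", [None])[0]
    return FmpUrlCall(
        host=parts.netloc,
        file_name=parts.path.lstrip("/"),
        script=script,
    )


def designated_script_runs(file_already_open: bool, opening_script_halts: bool) -> bool:
    """Restate the post's rule (an assumption, not FileMaker code):
    a Halt Script in the opening script only blocks the designated script
    when the URL call itself has to open the file."""
    if file_already_open:
        return True
    return not opening_script_halts


call = parse_fmpurl("fmp://0.0.0.0/Our_Secret_Information.fmp12?script=Relog_as_Admin")
print(call.file_name, call.script)
print(designated_script_runs(file_already_open=True, opening_script_halts=True))
```

A helper like this could be useful for auditing which scripts are reachable by URL, but the real protection remains the Privilege Set settings the post describes.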

-

image sync speed improvement

4 points

I was running some speed tests with EasySync recently and was surprised to see how long a sync took after I added a few images to the sample record set that ships with EasySync. It was taking over 5 min. to download those records from FileMaker Server, which was installed on the same machine as FileMaker Pro. A competitor's product was doing the same sync in under 10 seconds.

The interesting part was that all records in the sync took much longer to process once the images were added. In other words, the fact that I added some images to record #1 made records #2-100 take exponentially longer to process.

So, I did some digging and found that the entire payload was being saved to a local script $variable in the Pull Payload script. When the payload included images, that $variable was really large; too large to fit into FileMaker's available memory. This caused the number of page faults to increase by about 600,000 while the records were being processed.

My theory was that if I could reduce the amount of data stored in memory (variables) at once, then I could reduce the time it takes to process a large payload. It turns out this basic concept worked. The sync which previously took over 5 min. now only took 30 seconds! (And page faults barely increased at all.)

I've attached a file with these changes that proves my theory. I ended up saving each segment returned from the server to a new record in the EasySync table, then only extracting a single record at a time from the payload, reading from each payload segment as necessary.

NOTE: the attached file is a proof of concept ONLY! Please do not use it as-is in production; it has not been tested and will probably fail in all but the most obvious circumstances. Also, my system crashed once while I was working on this file, so it's been closed improperly at least once. DO NOT USE IT IN PRODUCTION!

I think I may start a fork of this project which includes this change; I'll post back here if I do.

FM_Surveys_Mobile_v1r3_DSMOD.fmp12

4 points
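The general idea in the post (process one record at a time from stored segments instead of holding the whole payload in one variable) can be sketched outside FileMaker. The Python below is only an analogy under stated assumptions: the payload arrives as text segments, records are separated by a newline, and `iter_records` is a hypothetical name. EasySync's real payload format is not described in the post.

```python
from typing import Iterable, Iterator


def iter_records(segments: Iterable[str], delimiter: str = "\n") -> Iterator[str]:
    """Yield one record at a time from a sequence of payload segments.

    Only the current segment plus any partial record carried over from the
    previous segment is held in memory, never the whole payload.
    The newline delimiter is an assumption made for this sketch.
    """
    buffer = ""
    for segment in segments:
        buffer += segment
        # Emit every complete record currently sitting in the buffer.
        while True:
            head, sep, tail = buffer.partition(delimiter)
            if not sep:
                break  # no complete record yet; wait for the next segment
            yield head
            buffer = tail
    if buffer:
        # Final record without a trailing delimiter.
        yield buffer


# Records may straddle segment boundaries, as in "c|" + "d" below.
segments = ["a|b\nc|", "d\ne", "|f\n"]
for record in iter_records(segments):
    print(record)
```

Because the function is a generator, the caller can pass in segments fetched lazily (for example, one stored segment record at a time), which is the part that keeps memory use flat.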

-

2016 FMP icon Pack for Mac

4 points

-

Emerging Security Trends Affect FileMaker Platform

Emerging Trends in Information Security Affect FileMaker Platform

By Steven H. Blackwell

March 17th 2016

The recently concluded annual RSA Security Conference showcased a number of important emerging trends in Information Security that likely will affect FileMaker Platform developers and Administrators of FileMaker Platform systems. In this BLOG entry, I will describe some of these and offer some observations about how they might apply to the FileMaker Platform.

Multi-Factor Authentication (MFA) will increasingly become a standard requirement for Identity and Access Management (I&AM) in organizations of all sizes. This is especially true for connectivity by mobile devices. And it is especially true for data hosted in the Cloud. As we saw recently, efforts to create a “two-factor authentication” system inside of the FileMaker Pro client product did not work out well at all. (http://fmforums.com/blogs/blog/112-eye-on-filemaker/) A true MFA system will require coordinated integration with FileMaker Server, wherever that server resides.

The data are still the key asset. Outer perimeter defenses, while important, are secondary to protecting the data from the inside out. The data are the asset we most seek to protect, wherever the data reside. For the most part, they reside inside of the database itself. That’s why finely-grained Privilege Sets, strong I&AM, Encryption At Rest, and Encryption In Transit are all so important for FileMaker Platform deployments.

Insiders are the new malware. And now, everyone is an Insider. Whether by inadvertence, by curiosity, by carelessness, or by malicious intent, those persons inside organizations and inside organizational supply chains remain a principal threat vector for compromise of digital assets. Any number of major recent data breaches over the past year or so apparently started in the organizational supply chain.

Context-sensitive and content-sensitive conditional authentication of identity assertions will become more and more common. What does this mean? A trusted insider accessing data from inside a corporate LAN may trigger one level of authentication requirement. That same user, when attempting access from outside the LAN, may trigger multiple steps (factors) of authentication requirements. Moreover, access to more sensitive data may require additional authentication factors. And when the context changes mid-session, additional authentication challenges may need to appear. This again will require close integration with FileMaker Server.

The need for cyber-insurance will increase dramatically. To mitigate the liability associated with data breaches, more and more organizations of all sizes are going to need to acquire cyber-insurance. Premiums will continue to rise. Organizations of all types and sizes face liabilities such as damage to brand reputation, civil judgments in suits brought by persons whose data are compromised, business interruptions, and, dare I even say it, cyber-extortion. The underwriting process for this will require a more stringent adherence to a range of Best Practices by those seeking the insurance. Small and medium-sized businesses, a staple of the FileMaker community, are perhaps least well equipped to survive a major breach absent this insurance.

Regulatory attention to security breaches will increase at both the Federal and State levels. Additionally, there will be concomitant increases in scrutiny about whether organizations have employed “reasonable” security practices. What constitutes such practices is sometimes unclear; however, in any given instance, the list may be extensive. The California Attorney General’s Office recently noted that there were at least twenty specific items that any organization should presume to employ in order to meet the standard of “reasonable” security practices. (These are the Center for Internet Security’s Critical Security Controls. https://www.cisecurity.org/critical-controls.cfm) The Attorney General’s report notes that in 2015 approximately 60% of Californians were victims of a data breach of one sort or the other. And the data involved are often the most sensitive type of information, including financial data and health-care records. California is often a leading-edge indicator for regulatory actions, and it is entirely to be expected that other states will follow suit here. (https://oag.ca.gov/breachreport2016)

So, where does this leave the FileMaker platform and the FileMaker Developer Community?

First, developers and administrators need to be sure they have properly aligned the security requirements of their systems to business requirements. This includes such items as brand reputation, customer/client data privacy, civil liability protection, regulatory compliance (State and Federal and international as applicable), and business continuity. I will be having much more to say about this in coming weeks.

Second, developers need to follow Best Practices for security in FileMaker Platform files. This includes granular Privilege Sets, Encryption at Rest, and File Access Protection.

Third, FileMaker Server Administrators also need to follow Best Practices for deployment, including appropriate OS for servers, a rigorous backup regimen including the tested ability to restore from backups, and Encryption in Transit.

Fourth, business unit managers at FileMaker Platform customers need training in Security Best Practices from the user standpoint. Likewise, they should assure that their employees have a similar awareness.

Fifth and finally, but certainly not least, we need to encourage FileMaker, Inc. to continue to improve the security schema of the Platform, most particularly the introduction of Multi-Factor Authentication (MFA) and the introduction of additional controls over the behavior of various external API’s. This includes Apple Events, Active X, Execute SQL, PHP, XML, FMPURL, and PlugIns.

4 points
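The context-sensitive authentication idea described in the post (one factor inside the corporate LAN, more from outside, more again for sensitive data) can be expressed as a tiny policy function. The Python below is a minimal sketch under loud assumptions: the `10.0.0.0/8` network, the factor counts and the name `required_factors` are all hypothetical and chosen only for illustration; nothing here reflects a FileMaker Server feature.

```python
import ipaddress

# Hypothetical corporate LAN range, used only for this illustration.
CORPORATE_LAN = ipaddress.ip_network("10.0.0.0/8")


def required_factors(client_ip: str, sensitive_data: bool) -> int:
    """Return how many authentication factors a request should present.

    Illustrative policy only:
      - inside the LAN: 1 factor
      - outside the LAN: 2 factors
      - sensitive data: one additional factor in either case
    """
    inside_lan = ipaddress.ip_address(client_ip) in CORPORATE_LAN
    factors = 1 if inside_lan else 2
    if sensitive_data:
        factors += 1
    return factors


print(required_factors("10.1.2.3", sensitive_data=False))
print(required_factors("203.0.113.7", sensitive_data=True))
```

Re-evaluating a function like this whenever the client address or the requested data changes is one way to model the "context changes mid-session" case the post mentions.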

-

Placeholder auto-enter

4 points

This post is simply to make others aware of a usage of placeholder text that I haven't seen mentioned before.

We have a situation where, when opening a popover to create a new record, the new record isn't created until the User enters data. However, there are a few auto-enter fields which are defaults, and they do not display. This is confusing to Users since, from their perspective, that data should already be there - they don't fill it in unless they want to change the value.

I always keep a field (global) called PLACEHOLDER. It is set to auto-enter ( replace ) with: If ( not IsEmpty ( Self ) ; "" ), and I use it for displaying calculation results on layouts when merge fields will not work, but this usage is a bit different ...

The PLACEHOLDER field can help with displaying these default auto-enters. No record has been created yet (and may not be if the User cancels), but I have set those fields' placeholder text to their auto-enter calculation and I've set the placeholder text style to the same font color as their regular text. Now default entries display.

BTW, this works nicely since, when the User enters the field, the value remains until the User starts to type, at which time the placeholder text disappears and the User's entry displays.

-

FileMaker Server - Primary/Standby

4 pointsThis feature is huge. Now that FileMaker 14 has been released here's the not-secret-anymore topic of my devcon presentation: Thursday, July 23 | FileMaker Developer Conference 20154 points

-

Ghost text techniques

4 points
Another fun option, if you want to get away from the overhead of the conditional formatting. Thanks to @jbante for posting this one. http://uxmovement.com/forms/why-infield-top-aligned-form-labels-are-quickest-to-scan/

I've been playing around with this idea for just over a week now. I'm noticing that layouts built this way look less busy, but still provide all of the info a user needs to know what data goes in a field, something that can be lost if the label is hidden. Google does this a lot in their interface (hiding interface objects and menus), to the point where it's not intuitive and you can't find the magic spot where the menu is hidden.
4 points

-

FMForums Upgrade

4 points
So, I finally took the plunge and upgraded the site to the new forum engine. I am planning to work the weekend to restore site features that are currently disabled. There may be times when the site goes unresponsive or generates an error. I already have a few trouble tickets pending with the site developers to provide a remedy.

I wish to thank the Site Advertisers for making this possible, but do please forgive the irony, as I don't have all the ad spaces working just yet; I hope to restore them as soon as possible. I'm having to take a crash course in some HTML/PHP/CSS logic. Think I'll need another cuppa.

I hope to have some site instructions, as things have changed a bit. One thing you may have noticed: before, we had both usernames and Display Names, but the new site only allows one, so we elected Display Names as they were already public facing. You will therefore need to log in using your Display Name or your email address or, if already configured, Facebook or Twitter (I hope to include other OAuth login methods in the future).

I'll post more in this topic when there is something you should know.
4 points

-

"Boolean" checkbox trouble

4 pointsI suggest that every act which could have been optimised but wasn't is poor practice. It is not poor practice because of principle but rather because of: If you let slide optimisation in this area on 2,500 records, the next time you'll be inclined to think letting slide with 3,000 records is fine. It is a slippery slope which breeds sloppiness and drags a good solution down into less than ideal. We can not always predict the environment and venue our solutions will be fired under. It may be fine over LAN but when accessed from an iPad with low-grade provider, the process which only took a blink over LAN now takes 4 seconds and THAT is a LONG time to a User. As for me, if I go to a website which takes more than 6 seconds to load, I move on. Users today will not tolerate slop. Compounded, each of the blow offs which were small, now each contribute to network traffic and begin to crawl. Do not give in. Optimize everything you do at all times. It is one of the few things you can control and it is the best investment in your solution. You may think you are only saving one second but multiply that over six months, performed by 25 users and you see clearly where this is going. Do not fall for the disease of sloppiness. Commit to excellence in your work. And yes, it is only my opinion because my opinion is the only one I have to share. And yes, I'm an obnoxious idealist. If that is the worst I am called, I'll take it.4 points

-

Grey background in layout mode

4 points
Your "Major Grid Spacing" is set to 1 point. You need to change it to something like 10 or more. Or you need to uncheck the box: View >> Grid >> Show Grid
4 points

-

how to start with server if stupid

4 points
Charity,

Since the best experience is when the end user has all the data they need local to their iPad and does not rely on connectivity, you may try this: create your solution in ONE file with multiple tables, one table for your catalog and another table for your customer data entry. If your catalog only changes every so often, that is fine; you can push out a new version to the end user to replace their database with all the catalog data. If they only need to add new customers and not look up historical customers, then you can essentially have them email data (exported as TAB, CSV, or Excel) from the customer table for you to import into your own file every night. (Not ideal, but it's a workaround.)

Since cost is a concern for you, please look at this: http://fmeasysync.com. It's a free, open-source framework for syncing your database. It may be a bit overwhelming at first, but I am confident that you can get there!

Charity, every year at the developer conference we (old salts) wonder where the "next generation" of FileMaker Developers is coming from. If you have a passion for this and accept the challenges head on, you will most likely be passing on your knowledge and wisdom to other newbies jumping into the fray in short time.

What goes into the External Data Sources really depends on where the files will ultimately be deployed. If two files are hosted, then they would use relative paths:

file:MyDatabase.fmp12

If one file is local to a device, then it needs the fully qualified URL path with either the PUBLIC IP address or a domain name. A 10.0.x.x or 192.168.x.x address is an internal IP address and wouldn't be accessible outside your environment.

fmnet:/192.168.10.10/MyDatabase.fmp12
fmnet:/fms.somehostingcompany.com/MyDatabase.fmp12

If you would like to see how it can be accessed when hosted, please send me a private message and I could host a sample file for you that you can test with.

Stephen
4 points

-

Display theme in Manage Layouts

4 points
I know this is lower priority than some of the other wishes but I can't help but just mention it ...

If we go to Manage > Themes, we see a list of themes loaded in this file and it also indicates how many layouts are assigned to each theme. But it would be nice if we knew which layouts were assigned to a theme and even had the ability to ... yeah I know ... probably never ... but to GTRR (so-to-speak) to those layouts or see a list of them at least. Manage > Layouts certainly has room to list each layout's theme along with the table and menu set. If nothing else, this would make life easier for us.

When a solution has 150 layouts, it can take quite a bit of time to go through them all to find the few layouts assigned to a theme needlessly. And each theme (even unused themes) loads all its definitions and takes up quite a bit of space. For instance, a recent example: a file had 13 themes but only 3 were used. By converting them all to the single theme, I saved 1.7MB right off the top and it took 15 minutes. So a way to quickly jump to layouts according to their theme would be helpful.

Or ... am I missing something here? Are there ways of seeing a list of layout names per theme? Thanks everyone for listening and hopefully providing a solution of which I was unaware. Otherwise I'll provide FileMaker feedback about it.

Also, there is a Custom Themes forum for FM12 but there isn't one for FM13. It might be nice to remove the 'FM12' portion from the forum title so we can put FM13 questions there as well ... or ... create another forum for Custom Themes in FM13. I prefer the former. Thank you!!
4 points

-

Script Management Best Practice

4 points
It's perfectly fine, even preferable, to keep your process split out into separate scripts. There are plenty of arguments for this from different sources, but I personally think that the best reason is that human working memory is one of the biggest bottlenecks in our ability to write software. It's dangerous to put more functionality in one script (or calculation, including custom functions) than you can keep track of in your head. If you find yourself writing several comments in your scripts that outline what different sections of the script do to help you navigate the script, consider splitting those sections of the script into separate sub-scripts, using the outline comments for the sub-script names. If your parent scripts start to read less like computer code and more like plain English because you're encapsulating functionality into well-named sub-scripts, you're doing something right. (The sub-scripts don't have to be generalized or reusable for this to be a useful practice. Those are good qualities for scripts, but do the encapsulation first.)

I think you'd be better off if the scripts shared information with script parameters and results instead of using global variables, if you can help it. When you set a global variable, you have to understand what all your other scripts will do with it; and when you use the value in a global variable, you have to understand all the other scripts that may have set it. This has the potential to run up against the human working memory bottleneck very fast. With script parameters and results, you only ever have to think about what two scripts do with any given piece of information being shared: the sub-script and its parent script.

Global variables can also lead to unintended consequences if the developer is sloppy. If one script doesn't clear a variable after the variable is no longer needed, another script might do the wrong thing based on the value remaining in that global variable.
With script parameters, results, and local variables, the domain of possible consequences of a programming mistake are contained to one script. I might call it a meta best practice to use practices that limit the consequences of developer error. Globals are necessary for some things; just avoid globals if there are viable alternative approaches. There is a portability argument for packing more functionality into fewer code units — fewer big scripts might be easier to copy from one solution into another than more small scripts. Big scripts are also easier to copy correctly, since there are fewer dependencies to worry about than with several interdependent smaller scripts that achieve the same functionality. For scripts, this is often easy to solve with organization, such as by putting any interdependent scripts in the same folder with each other. Custom functions don't have folders, though. For custom functions, another argument for putting more in fewer functions is that one complicated single function can be made tail recursive, and therefore can handle more recursive calls than if any helper sub-functions are called. This ValueSort function might be much easier to work with if it called separate helper functions, but I decided that for this particular function, performance is more important.4 points

-

How to prevent "scripting error (401)" in server log?

Do an ExecuteSQL to do a quick select, if that comes up empty you can skip the real FM find. It's important to understand though that "Set Error Capture On" does not prevent the error from *happening*. The only thing it does is hide the error from the user so that you can handle it silently. If you run the same script in your debugger you will see that FM also reports the error.4 points

-

Server script on computer startup

4 points
The trick is to use the fmsadmin command line, and that syntax is the same on Windows and Mac. So the only challenge is in calling that fmsadmin command line from your favourite OS scripting language: shell script / AppleScript on OSX and batch file / VBscript / PowerShell on Windows.

Below is a sample VBscript that automates shutting down FMS in a safe way. It should contain enough pointers to do the reverse.

' Author: Wim Decorte
' Version: 2.0
' Description: Uses the FileMaker Server command line to disconnect
'              all users And close all hosted files
'
' This is a basic example. This script is not meant as a finished product,
' its only purpose is as a learning & demo tool.
'
' This script does not have full Error handling.
' For instance, it will break if there are spaces in the FM file names.
' The script also does not handle infinite loops in disconnecting clients
' or closing files.
'
' This script is provided as is, without any implied warranty or support.

Const WshFinished = 1
q = Chr(34) ' the " character, needed to wrap around paths with spaces

'--------------------------------------------------------------------------------------------
' Change these variables to match your setup
theAdminUser = ""
theAdminPW = ""
pathToSAtool = "C:\Program Files\FileMaker\FileMaker Server\Database Server\fmsadmin.exe"
'--------------------------------------------------------------------------------------------

SAT = "cmd /c " & q & pathToSAtool & q & " " ' watch the trailing space
callFMS = SAT
If Len(theAdminUser) > 0 Then
    callFMS = callFMS & " -u " & theAdminUser
End If
If Len(theAdminPW) > 0 Then
    callFMS = callFMS & " -p " & theAdminPW
End If

listClients = callFMS & " list clients"
disconnectClients = callFMS & " disconnect client -y"
listfiles = callFMS & " list files -s"
closeFiles = callFMS & " close file "
stopServer = callFMS & " stop server -y -t 15"

' hook into the Windows shell
Set sh = WScript.CreateObject("wscript.shell")

' get a list of all clients and force kick them off
clientIDs = getCurrentClients()
clientCount = UBound(clientIDs)

' loop through the clients and kick them off
If clientCount > 0 Then
    fullCommand = disconnectClients
    Set oExec = sh.Exec(fullCommand)
    ' give FMS some time and then requery the list of clients
    Do Until oExec.Status = WshFinished
        WScript.Sleep 50
    Loop
    Do Until clientCount = 0
        WScript.Sleep 1000
        Debug.WriteLine "Waiting for clients to disconnect..."
        clientIDs = getCurrentClients()
        clientCount = UBound(clientIDs)
    Loop
End If

' get list of files and close them
fileIDs = getCurrentFiles()
fileCount = UBound(fileIDs)

' loop through the files and close them
If fileCount > 0 Then
    Do Until fileCount = 0
        fullCommand = closeFiles & fileIDs(0) & " -y"
        Set oExec = sh.Exec(fullCommand)
        ' give FMS some time and then requery the list of files
        Do Until oExec.Status = WshFinished
            WScript.Sleep 50
        Loop
        fileIDs = getCurrentFiles()
        fileCount = UBound(fileIDs)
    Loop
End If

' all clients and files stopped
' shut down the database server (does not stop the FMS service!)
fullCommand = stopServer
Set oExec = sh.Exec(fullCommand)
Do Until oExec.Status = WshFinished
    WScript.Sleep 50
Loop

' done, exit the script
Set sh = Nothing
WScript.Quit

' ------------------------------------------------------------------------------

Function getCurrentClients()
    tempCount = 0
    Dim tempArray()
    Set oExec = sh.Exec(listClients)
    ' in case there are no clients...
    If oexec.StdOut.AtEndOfStream Then Redim temparray(0)
    ' read the output of the command
    Do While Not oExec.StdOut.AtEndOfStream
        strText = oExec.StdOut.ReadLine()
        strText = Replace(strtext, vbTab, "")
        ' collapse runs of spaces into a single space
        Do Until InStr(strtext, "  ") = 0
            strText = Replace (strtext, "  ", " ")
        Loop
        If InStr(strText, "Client ID User Name Computer Name Ext Privilege") > 0 OR _
           InStr(strText, "ommiORB") > 0 OR _
           InStr(strText, "IP Address Is invalid Or inaccessible") > 0 Then
            ' do nothing
            Redim temparray(0)
        Else
            tempClient = Split(strtext, " ")
            tempCount = tempCount + 1
            Redim Preserve tempArray(tempCount)
            tempArray(tempCount-1) = tempClient(0)
        End If
    Loop
    getCurrentClients = tempArray
End Function

Function getCurrentFiles()
    tempCount = 0
    Dim tempArray()
    Set oExec = sh.Exec(listfiles)
    ' in case there are no files...
    If oexec.StdOut.AtEndOfStream Then Redim temparray(0)
    ' read the output of the command
    Do While Not oExec.StdOut.AtEndOfStream
        strText = oExec.StdOut.ReadLine()
        strText = Replace(strtext, vbTab, "")
        ' collapse runs of spaces into a single space
        Do Until InStr(strtext, "  ") = 0
            strText = Replace (strtext, "  ", " ")
        Loop
        If InStr(strText, "ID File Clients Size Status Enabled Extended Privileges") > 0 OR _
           InStr(strText, "ommiORB") > 0 OR _
           InStr(strText, "IP Address Is invalid Or inaccessible") > 0 OR _
           Left(strtext, 2) = "ID" Then
            ' do nothing
            Redim temparray(0)
        Else
            tempFile = Split(strtext, " ")
            status = LCase(tempFile(4))
            If status = "normal" Then
                tempCount = tempCount + 1
                Redim Preserve tempArray(tempCount)
                tempArray(tempCount - 1) = tempFile(1) & ".fp7"
            End If
        End If
    Loop
    getCurrentFiles = tempArray
End Function
4 points

-

ExecuteSQL as custom function?

4 pointsWhy not load all their information into one global variable and then you can always parse out that info since you should only ever have one row returned. ExecuteSQL ( "SELECT ID, firstname, lastname, title, date_hire FROM Staff WHERE accountName = ?" ; "¶" ; "" ; Session::accountName ) Then you can use GetValue ( ) to parse out specific information.4 points

-

No records found = 401 = Filemaker script error

This is kind of janky, but you have to insert a script step that is guaranteed not to throw an error code (like "Show All Records") and then exit the script using the "Exit Script" step, i.e.:

If Get(lastError) > 0
    action for failed find
    Show All Records
    Exit Script
Else
    action for successful find
End If

If you have multiple "Perform Find"s, make sure you add the "If Get(lastError) > 0" right after the Perform Find, and then the "Show All Records" and "Exit Script" steps after your other script steps but before the "End If" or "Else" script step. This suppresses the error in the schedule section of FMS.
4 points

-

"Strongest" way to pass multiple parameters.

The two main schools of thought for passing multiple script parameters are return-delimited values and name-value pairs; the choice is a matter of taste, whether you prefer array or dictionary data structures. Both approaches have solutions for dealing with delimiters in the data. For return-delimited values, you can use the Quote function to escape return characters, and Evaluate to extract the original value: this standard. As long as you have a function to define a name-value pair in a parameter (# ( name ; value)) and another function to pull specific values back out again (#Get ( parameters ; name )), it works, and all variations should behave the same way. Any other supporting functions are purely for convenience, and probably can be re-implemented for whatever representation syntax you want. So I'd write:

Quote ( "parameter 1") & ¶ & Quote ( "parameter 2¶line 2" )

will give you the parameter:

"parameter 1"
"parameter 2¶line 2"

and you can retrieve the parameters:

Evaluate ( GetValue ( Get ( ScriptParameter ) ; 2 ) )

with the result:

parameter 2
line 2

Every name-value pair approach worth using has its own approach for escaping delimiters. In my own opinion, XML-style syntax is unnecessarily verbose. My personal preference when working with name-value pairs these days is "FSON" syntax (think "FileMaker-native JSON"), which is where the parameters are formatted according to the variable declaration syntax of a Let function:

$parameter1 = "value 1";
$parameter2 = "value 2¶line2";

I only consider name-value pair functions practical when the actual syntax is abstracted away with custom functions. Once you do that, the mechanics of working with the different types should be interchangeable, such as any name-value pair function set that matches

# ( "parameter1" ; "value 1" ) & # ( "parameter2" ; "value 2¶line 2" )

and:

#Get ( Get ( ScriptParameter ) ; "parameter2" )

I only have 2 requirements for any parameter-passing syntax:

1. It can encode its own delimiter syntax as data in a parameter, and therefore any text value (already mentioned in this thread), and
2. it can arbitrarily nest parameters, i.e., I can include multiple sub-parameters within a single parameter.

The requirements tend to work hand-in-hand with each other. If I can do those two things, I can construct everything else I will ever need within that framework.
4 points

-

Conditional format drop-down calendar?

Hi Charity, We all deal with wanting to eliminate the display of buttons, checkboxes, drop-down calendars and such on that last row when Allow Creation is in effect. Instead of having to eliminate the objects individually, you can accomplish it all with conditional formatting AND make it clear to your User that they click the last row to add a new record. Here is how: Create a text box with the words [ Add new record here ... ] Make the background transparent. Color of text does not matter Resize the text box to same size as single portal row Format the text box as follows (using conditional formatting) First entry: Formula = not IsEmpty ( the primary key from the portal table occurrence ). Then below select ‘more formatting’ and set the font size to custom and 500. Second entry: Formula = IsEmpty ( the primary key from the portal table occurrence ). And below set the text to white and the background to black as example. You can set it anything you wish. Select this text box and Arrange > Bring to Front and place it over your top portal row. This one text box hides checkboxes, drop-down calendars, buttons, lines on empty fields, etc only on the empty row and also provides a clean row in which to provide your message. I use it on all Allow Creation portals - just by changing the ID referenced within the conditional format portion.4 points

-

Changing Dimensions of Layout Parts Dynamically

@LaRetta shared this sample file with me @comment and I really liked the concept so I played around with it a little. I was able to accomplish the same result with a single layout, which means I'd be more likely to use this technique myself. I also added layout objects so I could measure the various part sizes rather than having to keep a layout size and script config in sync; this is optional and only my preference for maintenance reasons, though. @IlliaFM Since you just mentioned a footer, I added that to my sample file as well. If you try to use my alternate implementation of this technique, be aware there are two fields layered on top of each other that probably only look like one field at a glance. This doesn't solve the other issues @comment just mentioned, though.

MeasureBeforeDisplayDS.fmp12
3 points

-

Sale Date within date range results in "x" Quarter

Here's a table showing what happens at each step, for each possible value of Month(), starting with September:

Month ( SaleDate )                                9  10  11  12   1   2   3   4   5   6   7   8
Month ( SaleDate ) - 9                            0   1   2   3  -8  -7  -6  -5  -4  -3  -2  -1
Mod ( Month ( SaleDate ) - 9 ; 12 )               0   1   2   3   4   5   6   7   8   9  10  11
Div ( Mod ( Month ( SaleDate ) - 9 ; 12 ) ; 3 )   0   0   0   1   1   1   2   2   2   3   3   3

As you can see, any month in the first quarter will now have the value of 0, the next 3 months will return 1, and so on. All that remains is to add 1 and prepend the Q character to get the expected Q1 to Q4 numbering.

---

Conceptually, there are two steps taking place here:

- Renumber the months so that September is moved to the beginning of the year;
- Divide them into groups of 3.
3 points
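For anyone who wants to check the arithmetic outside FileMaker, here is a minimal sketch of the same renumber-then-group idea in Python. The function name and the first_month parameter are my own inventions for illustration; Python's % on a positive modulus returns a non-negative result, which matches the Mod row in the table.

```python
def fiscal_quarter(month: int, first_month: int = 9) -> str:
    """Return 'Q1'..'Q4' for a calendar month (1-12).

    first_month is the calendar month that opens the fiscal year
    (9 = September, as in the table above). Hypothetical helper.
    """
    if not 1 <= month <= 12:
        raise ValueError("month must be in 1..12")
    # Step 1: renumber the months so first_month becomes 0, wrapping around.
    shifted = (month - first_month) % 12
    # Step 2: divide into groups of 3, then add 1 and prepend "Q".
    return "Q" + str(shifted // 3 + 1)


if __name__ == "__main__":
    for m in (9, 10, 11, 12, 1, 2, 3, 4, 5, 6, 7, 8):
        print(m, fiscal_quarter(m))
```

Changing first_month is all it should take to move the start of the year elsewhere, which is the same as changing the 9 in the FileMaker expression.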

-

Calculating Depth for Bench Seating

3 points

-

SQL SLOW

3 points
Not sure in what form you need the results. Here's your file back with two global fields that contain a list of unique skus and the count of that field (set by script).

DEMOv2.fmp12
3 points

-

Forum etiquette - your help is appreciated.

Let's all get along? It's been rare for our forums, but on occasion I have had to step in to keep the peace and harmony of the site. At times personalities clash, and typed responses are often written in haste and are the sort of thing that wouldn't be said if we were all in the same room together. I am encouraging everyone to re-read our guidelines: https://fmforums.com/guidelines/ Let's please keep threads on topic and not abuse the "reporting" system. Please be courteous to others, and if you have offended someone please extend a sincere apology. The thing I least enjoy doing is putting people in 'timeout' or banning them from the site. Thank you.
3 points

-

Getting order information from Amazon Merchant Services

OK - I did it myself. Over a couple of weekends of spare time, I wrote a few dozen scripts that interface between FileMaker and the Amazon Marketplace Web Service API. Sad to say, this is non-trivial. Amazon's documentation is poor: there are a surprising number of mistakes, misspellings, and obsolete/outdated sections. The one bright spot is the Amazon MWS Scratchpad, which provides a direct sandbox to allow testing different calls. Most of the documentation and online help is aimed at PHP, Java, and Python developers; it's not too difficult to map this into FileMaker land.

I wrote scripts to gather my orders from Amazon Marketplace, and to send confirmations to buyers. Like most APIs, there are many gotchas. Here are a few hints, mainly to save time for others in my position.

1) A simple FileMaker WebViewer is *not* going to work, unless you do screen-scrapes from the Amazon MWS Scratchpad.

2) I used cURL to communicate with Amazon Web Service via POST. The information to be POSTed is the Amazon parameter string (which includes the hashed signature). The Monkeybread Software FileMaker functions (www.mbsplugins.eu) are just the ticket.

3) The outgoing information must be signed with a SHA256 hash. Again, this is available in the Monkeybread Software functions. Use MBS("Hash.SHA256HMAC"; g_AWS_Secret_Key; g_AWS_Text_to_Sign_With_NewLines_Replaced; 1)

4) Getting the correct SHA256 signature requires very strict observance of line-feeds ... carriage return / linefeeds in OS X and FileMaker will cause a bad signature. Before making the SHA256 hash, do a complete replace so as to generate only Unix-style line-feeds. Within the "canonical parameter list" there aren't any linefeeds (but there are linefeeds before that list).

5) The Amazon API demands ISO-8601 timestamps, based on UTC. FileMaker timestamps are not ISO-8601, but there are several custom functions to generate these. The FileMaker function Get(CurrentTimeUTCMilliseconds) is very useful, but its result must be converted into ISO-8601. Notice that Amazon UTC timestamps have a trailing "Z" ... when I did not include this, the signatures failed.

6) The Amazon API must have URL-encoded strings (UTF-8). So the output from the SHA256 hash must be converted to URL encoding; again, the Monkeybread software comes through (use the MBS function Text.EncodeToURL).

7) The Amazon "canonical parameter list" must be in alphabetical order, and must include all of the items listed in the documentation.

8) Errors from Amazon throttling show up in the stock XML return, but check for other errors returned by the cURL debug response. At minimum, search through both responses by doing a FileMaker PatternCount (g_returned_data; "ERROR").

9) When you're notified of a new Amazon order, you must make two (or more) cURL calls to Amazon MWS:
   a) First, do a cURL request to "list orders", which will return all the Amazon Order Numbers (and some other info) for each order since a given date/time.
   b) Then, knowing an order number, do another cURL request to get all the details for a given order. Repeat this step for every new Amazon order number.
   Each of these calls requires your Amazon Seller_ID, Marketplace_ID, Developer_Account_Number, Access_Key_ID, and your AWS_Secret_Key. Each also requires a SHA-256 hash of all this information along with the "canonical parameter list".

If anyone wants my actual scripts, drop a note to me. (At this moment, my scripts do the all-important /orders /list orders and /get order. I'm almost finished writing a /feeds FileMaker API to send confirmations. I probably won't build scripts to manage inventory or subscriptions, but once you've built a framework for the scripts, it's not difficult to expand to more functionality.)

Best of luck all around,
-Cliff Stoll
3 points
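For readers who want to cross-check a signature produced in FileMaker, hints 3 through 7 can be sketched in Python using only the standard library. This is a rough sketch under assumptions, not the scripts described above: the function names are invented, and the layout of the text to sign (HTTP verb, host, path, then the sorted parameter list, each separated by a single line feed) and the Base64 encoding of the hash are my assumptions about what Amazon's documentation asks for, so verify them against the MWS Scratchpad before relying on this.

```python
import base64
import hashlib
import hmac
from datetime import datetime, timezone
from urllib.parse import quote


def iso8601_utc(moment: datetime) -> str:
    """Format a timezone-aware datetime as ISO-8601 UTC with the trailing 'Z' (hint 5)."""
    return moment.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")


def canonical_query(params: dict) -> str:
    """URL-encode names and values (UTF-8) and join them in sorted order (hints 6 and 7)."""
    pairs = sorted(params.items())
    return "&".join(
        quote(str(name), safe="-_.~") + "=" + quote(str(value), safe="-_.~")
        for name, value in pairs
    )


def sign_request(secret_key: str, host: str, path: str, params: dict) -> str:
    """Return a Base64 HMAC-SHA256 signature over the text to sign (hints 3 and 4).

    Only bare line feeds are used as separators; a stray carriage
    return anywhere in this text would change the signature.
    """
    text_to_sign = "\n".join(["POST", host, path, canonical_query(params)])
    digest = hmac.new(
        secret_key.encode("utf-8"), text_to_sign.encode("utf-8"), hashlib.sha256
    ).digest()
    return base64.b64encode(digest).decode("ascii")


if __name__ == "__main__":
    # Placeholder values only; substitute your own host, path, keys and parameters.
    demo_params = {
        "Timestamp": iso8601_utc(datetime.now(timezone.utc)),
        "Action": "ListOrders",
    }
    signature = sign_request("not-a-real-secret", "example.invalid", "/Orders", demo_params)
    # The signature itself still has to be URL-encoded before it is POSTed.
    print(quote(signature, safe="-_.~"))
```

If the value printed here differs from what your FileMaker script produces for the same inputs, the usual suspects are the ones listed above: line endings, parameter order, or a missing "Z" on the timestamp.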

-

Portal Sorting with FM12

3 points
Here is a demo of how easily portal sorting can be done in FM12. This demo uses the calculation that Tom Fitch provided a while ago with his portal sorting technique, but one can use the PositionValue custom function as well.

Portal_sort_fast12.zip
3 points

-

Multi-part composite serial number for items

I don't see anything "unusual" in this numbering scheme. This question comes up quite often: you have a parent-child relationship, and you want to number the children of each parent with its own series (prepending the other digits representing the parent ID and the year/month is trivial).

Usually, the idea is to take the maximum existing value in the series (available either from the point of view of the parent, or through a self-join relationship of the child table based on matching parent ID), and add 1 to that. This will work 99.9% of the time, until two users create records at roughly the same time and end up with duplicate numbers. So the first "challenge" is to prevent such a situation, and it could be accomplished by (a) forcing the users to create new child records by script only, and (b) having the script lock the parent record first and, failing to do that, abort the task.

However, the "challenge" doesn't end there: you must also consider what will happen if the user decides that the parent selected initially is not the right one and needs to be changed to another. Instead of dealing with this, let me also point out that if any record is deleted, you will have a gap in the series anyway. So the real question I would ask here is this: is the goal worth the means required to achieve it?
3 points

-

Extract Email id's with matching domain from list of values

I would say that the idea is to append something to the values that do not end with the specified suffix. This is done in three steps:

1. Protect the values that do end with the specified suffix from being modified in step 2 by substituting the suffix and the subsequent return with a reserved character (a);
2. Substitute all remaining returns with a reserved character (b) and a return; this effectively appends (b) to all values that do not end with the specified suffix;
3. Reverse step 1.

The result of this is indeed what you show in your post:

[email protected]
[email protected](b)
[email protected]
[email protected](b)
[email protected]

and when this is used to filter the original list, only the unmodified, wanted values remain. IIRC, I developed this idea out of something that Agnès Barouh did; I don't recall exactly what that was.
3 points
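The three substitution steps can be tried out in Python as a rough sketch. Everything here is illustrative: the function name is made up, the two reserved characters are arbitrary control characters assumed not to occur in the data, and the addresses in the usage lines are invented. The final list comprehension stands in for filtering the original list against the modified one.

```python
def filter_by_suffix(values, suffix, a="\x01", b="\x02"):
    """Keep only the values that end with suffix, using the three-step substitution.

    a and b are reserved characters assumed not to appear in any value.
    """
    # Terminate every value with a return, as in a return-delimited list.
    text = "\n".join(values) + "\n"
    # Step 1: protect wanted values by replacing suffix + return with (a).
    step1 = text.replace(suffix + "\n", a)
    # Step 2: every remaining return belongs to an unwanted value; mark it with (b).
    step2 = step1.replace("\n", b + "\n")
    # Step 3: reverse step 1, restoring the suffix and the return.
    step3 = step2.replace(a, suffix + "\n")
    # Filter the original list by the modified one: only unmodified values match.
    modified = set(step3.split("\n"))
    return [value for value in values if value in modified]


if __name__ == "__main__":
    sample = ["ann@one.example", "bob@two.example", "cy@one.example"]
    print(filter_by_suffix(sample, "@one.example"))
```

Note that a value which merely contains the suffix somewhere in the middle is not protected in step 1, because the suffix has to be followed directly by the return.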

-

SVG Icon Manager

3 pointsI did a video with Richard Carlton http://filemakervideos.com/filemaker-14-svg-icon-helper-tool-filemaker-14-training/ about SVG files and created a small helper tool. In that video, I explain why I set the fill color to white, so that the icons appear nicely in the FM icon palette. You can see in the helper tool, how I did it and change to whatever you like, since it is completely free and unlocked.3 points

-

Overriding calculated values

3 points
Make EndDate auto-enter a calculated value, replacing existing value =

Case ( Get ( ActiveFieldTableName ) & "::" & Get ( ActiveFieldName ) = GetFieldName ( Self ) ; Self ; StartDate + Duration )

Make Duration auto-enter a calculated value, replacing existing value =

Case ( Get ( ActiveFieldTableName ) & "::" & Get ( ActiveFieldName ) = GetFieldName ( Self ) ; Self ; EndDate - StartDate )

Alternatively, this could be solved by script triggers.
3 points

-

Conditionally prevent edit of field contents

I use 'Hide Object When'. Make two instances of the same field, One is editable, the other not. Give them complementary conditions for hiding and place them on top of each other. The non-enterable field is hidden when the value not = "No". The enterable field is hidden when the value = "No". Easy in FM 13...3 points

-

Count records in related table and display in portal

Define the self-join as: Runs::idEntries_fk = Runs 2::idEntries_fk AND Runs::idDates_fk ≥ Runs 2::idDates_fk where Runs 2 is a new occurrence of the Runs table. Then define a calculation field (result is Number) = Count ( Runs 2::idRuns ) This will return the number of times the entry has appeared in the Runs table before (and including) the current week. Note that the count is discontinuous: if an entry appeared for 3 weeks, then missed 2 weeks and then reappeared for 4 weeks, the result will be the total 7 weeks.3 points
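To see what the self-join returns without building the relationship, here is a small Python sketch of the same match rule: same entry, and a date less than or equal to the current row's date. The tuple layout and the function name are assumptions made for illustration, with plain integers standing in for idEntries_fk and idDates_fk.

```python
def cumulative_appearances(runs):
    """For each (entry_id, date_id) row, count rows with the same entry and date_id <= this one.

    Mirrors Count ( Runs 2::idRuns ) evaluated from each row of Runs.
    """
    return [
        sum(1 for other_entry, other_date in runs
            if other_entry == entry and other_date <= date)
        for entry, date in runs
    ]


if __name__ == "__main__":
    # Entry 1 appears in weeks 1, 2 and 5; entry 2 appears in week 2 only.
    sample = [(1, 1), (1, 2), (2, 2), (1, 5)]
    print(cumulative_appearances(sample))
```

The gap between weeks 2 and 5 for entry 1 does not reset the count, which is the discontinuity mentioned above.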

-

Finding Unused Styles

http://www.rcconsulting.com/downloads.html At the bottom is Layout Optimizer. Not affiliated with RCC at all.

3 points

-

Find-mode Symbols (=, etc.) and Text-field Index Usage

It's been a long time since I have tested this, and it's always good to test again in order to see what optimizations newer versions have brought. What I see (in version 11) is this:

Perform Find [ Criteria: Data::Indexed: “$searchPhrase” ] - 1 second the first time after the file is opened; subsequent finds are instant.
Perform Find [ Criteria: Data::Indexed: “==$searchPhrase” ] - 5 seconds the first time after the file is opened; subsequent finds take between 1 and 2 seconds.
Perform Find [ Criteria: Data::Unindexed: “==$searchPhrase” ] - 5 seconds.

Draw your own conclusions.

3 points

-

All entry points

OK, first things first.

1. Someone needs to make an assessment of the level of sensitivity of the data that will be in these files and the level of adverse impact to the organization if a breach occurs. That assessment can guide your decisions about the level of security needed.
2. Create in each file a Privilege Set for each role that will be using the system. Assign that role the privileges it needs.
3. When #2 is completed--and it can be an on-going process--you can create Accounts for each person who will access the files. Those Accounts can be internal to the files, or they can be externally authenticated by Active Directory, Open Directory, or local server groups. Each Account is assigned to one of the Privilege Sets you created in #2. This is done directly in the file for internal Accounts, or through matching Groups for external authentication.
4. This system then propagates through to all client types: FileMaker Pro, FileMaker Go, and WebDirect™. When a user is challenged for credentials, the user enters his/her Account name and password. If authenticated, the user has access to the files with the privileges granted in Step #2.

Finally, two other items. If your server is not robust, you won't be using WebDirect for more than 4 or 5 users. Larger WebDirect deployments require very robust servers. I would also recommend that you get someone who knows FileMaker Server and FileMaker security to come into your organization and give you some assistance before you deploy all of this. It sounds as if your situation is complex, and if you don't do this correctly at the outset you're going to be plagued with issues.

Steven

3 points

-

Version 12 icon for Mac users

Well, version 13 has been released, but it has the same icon as v12. Since you can run multiple versions on the Mac, a visual distinction always helps. I have attached a new 'FM12DApp.icns' file to put into the app's content / resources folder. After you replace it, you can 'get info' on the app, select the icon in the top left corner, then copy / paste to refresh the new icon.

FM12DApp-blue.zip FM12DApp-green.zip FM12DApp-orange.zip

3 points

-

Compare a field to all entries of a value list

Try =

not IsEmpty ( FilterValues ( Scope::FieldName ; ValueListItems ( Get ( FileName ) ; "ValueListName" ) ) )

3 points
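As a rough illustration of what this expression tests, the Python sketch below returns true when at least one value in the field also appears in the value list. The function name and sample values are made up, a newline stands in for FileMaker's return, and the sketch compares strings exactly, which may not match FileMaker's own comparison rules.

```python
def matches_value_list(field_value, value_list_items):
    """True if any return-separated value in `field_value` is an item
    of the value list (both arguments are newline-delimited strings)."""
    allowed = {item for item in value_list_items.split("\n") if item}
    # Equivalent in spirit to: not IsEmpty ( FilterValues ( field ; items ) )
    kept = [v for v in field_value.split("\n") if v in allowed]
    return len(kept) > 0


items = "Red\nGreen\nBlue"          # hypothetical value list contents
print(matches_value_list("Green", items))
print(matches_value_list("Purple", items))
```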

-

ExecuteSQL Custom Function

FileMaker Server will try to execute the SQL query on the server so you will only get the result. If that result is a record set of thousands of records then obviously that is what FMS will have to send you. There is one exception to FMS doing the query for you: if you have an open record in your session for the target table then FMS will send you the whole table so that your client can perform the query including the results of your open record. That is usually not a problem unless there are more than say 10,000 records in the target table. And the wait becomes exponentially longer the more records in the target table.

An example:

Table with 1,000,000 records. You construct a SQL query that results in 1 record. You have no open records in the target table (if other people have open records, that does not matter) --> FMS will do the query and return you the data for the one record. And it will be fast.

Table with 1,000,000 records. You construct a SQL query that results in 1 record. You DO have open records in the target table (if other people have open records, that does not matter) --> FMS will send you the data for ALL 1,000,000 records and your local client will execute the SQL query. It will be very slow.

3 points

-

Serial Number Field based on the current year and a serial no.

The challenge comes when on 1 January the year rolls over and the serial number resets to 1, several projects are created AND THEN somebody wants to create a project and back-date it to the previous year. It can be done if you are smart and use a table to store the project numbers: each record is a year, with a field to store the next project number. As projects are created, the serial number is incremented. When a new year starts, a new Year record is created and the serial numbers for that year are tracked. The previous year's serial numbers are still remembered. If somebody wants to create a project for last year, just look up that year's record and increment that serial number.

3 points
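The per-year counter table described here can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the class name, the in-memory dict standing in for the Year table, and the "YYYY-NNNN" number format are all invented for the example, and a real multi-user solution would also need to guard against two users taking the same number at once.

```python
class ProjectNumbers:
    """One counter per year, like one record per year in a Year table."""

    def __init__(self):
        self._next_serial = {}  # year -> next serial number to hand out

    def issue(self, year):
        """Return the next project number for `year` and advance its counter."""
        serial = self._next_serial.get(year, 1)   # a new year starts at 1
        self._next_serial[year] = serial + 1
        return f"{year}-{serial:04d}"             # hypothetical format


numbers = ProjectNumbers()
print(numbers.issue(2023))
print(numbers.issue(2023))
print(numbers.issue(2024))   # the new year starts its own sequence
print(numbers.issue(2023))   # a back-dated project continues last year's sequence
```

Because each year keeps its own counter, a back-dated project picks up where that year left off instead of colliding with the current year's numbers.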

-

working out the number of working days between 2 dates, when your weekend is NOT Sat-Sun

Try:

RegularDays =

Let ( [
n = EndDate - StartDate + 1 ;
w = Div ( n ; 7 ) ;
r = Mod ( n ; 7 ) ;
d = DayOfWeek ( StartDate ) ;
o = Case ( r ; ( d + r > 6 ) + ( d + r > 7 ) )
] ;
5 * w + r - o
)

WeekendDays =

Let ( [
n = EndDate - StartDate + 1 ;
w = Div ( n ; 7 ) ;
r = Mod ( n ; 7 ) ;
d = DayOfWeek ( StartDate ) ;
o = Case ( r ; ( d + r > 6 ) + ( d + r > 7 ) )
] ;
2 * w + o
)

3 points
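When adapting formulas like these, it can help to have a slow but obvious reference to compare against. The Python sketch below simply walks the date range one day at a time; it is a brute-force cross-check, not a translation of the closed-form calculation. The function name is made up, and the default Friday-Saturday weekend is my reading of the comparisons against 6 and 7 (DayOfWeek numbers Sunday as 1), so treat it as an assumption and pass a different set if your weekend differs.

```python
from datetime import date, timedelta


def count_days(start, end, weekend=frozenset({4, 5})):
    """Return (regular_days, weekend_days) for the inclusive range start..end.
    `weekend` uses Python's weekday() numbering (Monday=0 ... Sunday=6);
    the default {4, 5} means Friday and Saturday (an assumption)."""
    regular = 0
    weekend_days = 0
    day = start
    while day <= end:
        if day.weekday() in weekend:
            weekend_days += 1
        else:
            regular += 1
        day += timedelta(days=1)
    return regular, weekend_days


# 1 January 2024 was a Monday.
print(count_days(date(2024, 1, 1), date(2024, 1, 7)))   # one full week
print(count_days(date(2024, 1, 1), date(2024, 1, 5)))   # Monday through Friday
```

Running both this and the FileMaker calculations over a handful of sample ranges, including ranges that start on each weekend day, is a cheap way to confirm they agree for your data.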

This leaderboard is set to Los Angeles/GMT-07:00